|

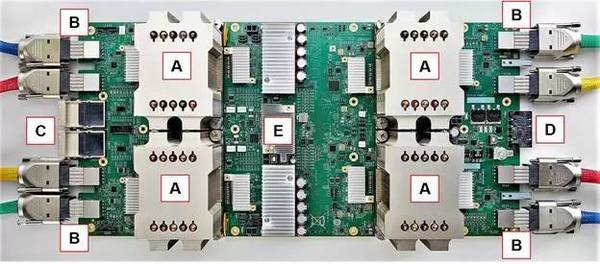

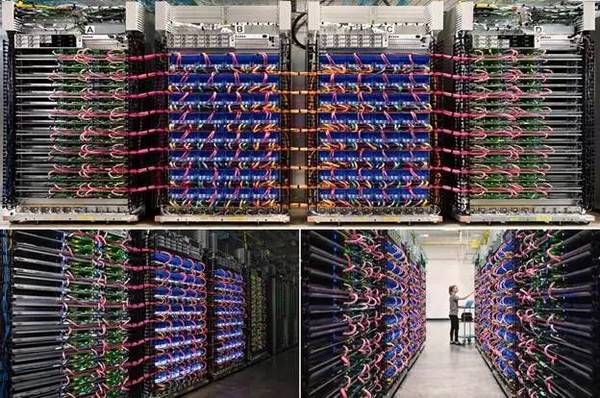

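In other words, compared with a GPU, the TPU2 is more of a coprocessor and depends more heavily on a general-purpose processor such as a CPU. This is one of Google's trade-offs. The article also shows that Google's experience in the data center lets it use board-level and system-level design optimizations to make up for its weaker chip design capability.

On the other hand, is hardware architecture a barrier that secures a competitive advantage? As noted before, computer architecture research is already very mature: innovation is hard, and building something nobody else can build is basically impossible. Nvidia's latest GPU adds Tensor Cores, and its Xavier SoC for autonomous driving includes a dedicated hardware accelerator, the DLA (Deep Learning Accelerator). To support training as well (the first-generation TPU supported only inference), Google's TPU2 adds floating-point support. The details are not public yet, but one can guess that its architecture has been substantially improved over the simple systolic array of the first-generation TPU. Evidently, while trading barbs in public, the two companies are also borrowing each other's strengths. For a mature team, improving the hardware architecture is not a great difficulty. The bigger risk lies in the impact that architectural changes have on the software and hardware ecosystem (yet another trade-off).

The article "Under The Hood Of Google’s TPU2 Machine Learning Clusters" concludes as follows:

"There is not enough information yet about Google’s TPU2 stamp behavior to reliably compare it to merchant accelerator products like Nvidia’s new “Volta” generation. The architectures are simply too different to compare without benchmarking both architectures on the same task. Comparing peak FP16 performance is like comparing the performance of two PCs with different processor, memory, storage, and graphics options based solely on the frequency of the processor."

"That said, we believe the real contest is not at the chip level. The challenge is scaling out compute accelerators to exascale proportions. Nvidia is taking its first steps with NVLink and pursuing greater accelerator independence from the processor. Nvidia is growing its software infrastructure and workload base up from single GPUs to clusters of GPUs."

"Google chose to scale out its original TPU as a coprocessor directly linked to a processor. The TPU2 can also scale out as a direct 2:1 accelerator for processors. However, the TPU2 hyper-mesh programming model doesn’t appear to have a workload that can scale well. Yet. Google is looking for third-party help to find workloads that scale with TPU2 architecture."

For data-center training and inference systems, the competition is no longer at the level of a single chip. The question is whether a design can scale out to exascale (exaFLOPS, that is, 10^18 floating-point operations per second). The TensorFlow Research Cloud (TFRC), launched alongside TPU2, is the more critical move for growing TPU2's applications and ecosystem. It is also worth taking a look at the board-level and rack-level photos Google showed this time.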
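To get a rough feel for what "exascale" means in accelerator counts, here is a minimal back-of-the-envelope sketch. It assumes Google's publicly announced peak of 180 TFLOPS per four-chip TPU2 board, a figure that does not come from this article, and the `efficiency` parameter is a purely hypothetical knob for how much of that peak a real workload sustains. As the quoted passage warns, peak numbers say little about real performance, so treat the result as an order-of-magnitude estimate only.

```python
import math

EXA = 10**18  # 1 exaFLOPS = 10^18 floating-point operations per second

# Assumption (not from the article): Google's announced peak for one
# four-chip TPU2 board, 180 TFLOPS.
TPU2_BOARD_PEAK = 180e12


def devices_for_target(target_flops, peak_per_device_flops, efficiency=1.0):
    """Return how many devices are needed to reach target_flops.

    efficiency is the hypothetical fraction of peak throughput that a
    workload actually sustains (0 < efficiency <= 1).
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    sustained = peak_per_device_flops * efficiency
    return math.ceil(target_flops / sustained)


if __name__ == "__main__":
    # Ideal case: every board runs at peak with perfect scaling.
    print(devices_for_target(EXA, TPU2_BOARD_PEAK))
    # Hypothetical case: workloads sustain only half of peak.
    print(devices_for_target(EXA, TPU2_BOARD_PEAK, efficiency=0.5))
```

Even in the ideal case the answer is several thousand boards, which is why interconnect, programming model, and workloads that actually scale matter more here than the peak figure of any single chip.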

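For an AI chip project, thinking through the whole software and hardware ecosystem matters far more than the design of the underlying hardware architecture. In the end, what counts is giving users a solution that is easy to use. (Editor: 本港台直播) |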